File:Comparison of rubrics for evaluating inter-rater kappa (and intra-class correlation) coefficients.png - Wikimedia Commons

The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

Cronbach's Alpha, Cohen's kappa Intra Class Correlation Coefficient and...

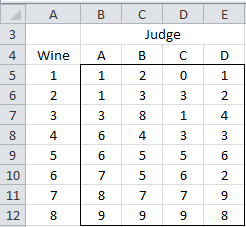

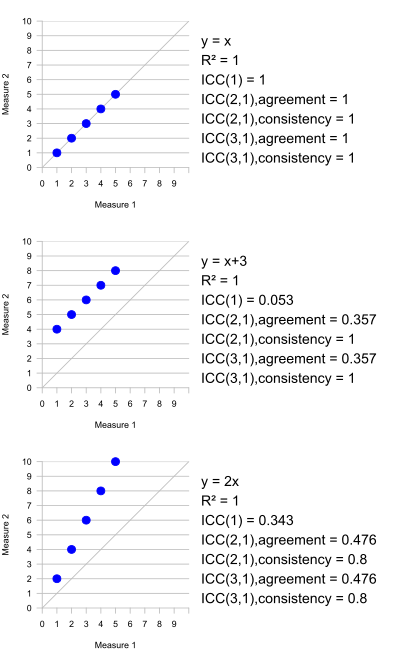

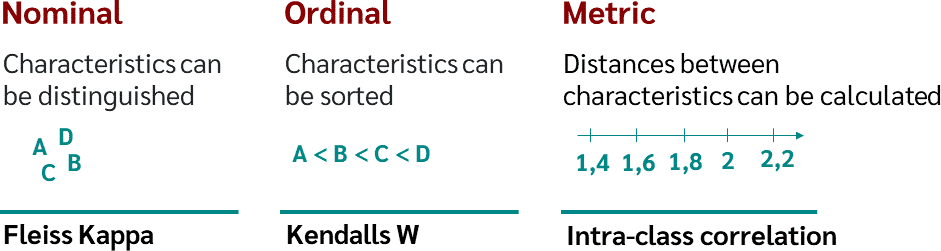

Agreement Measurement (Part 1/2). Inter-rater reliability (Inter-Rater… | by Parin Kittipongdaja | Medium

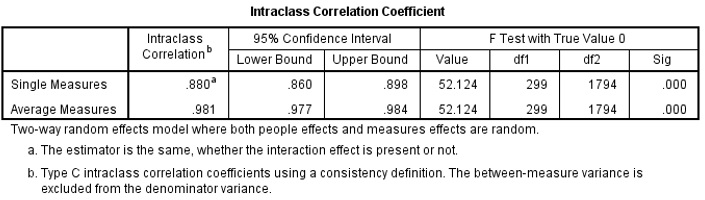

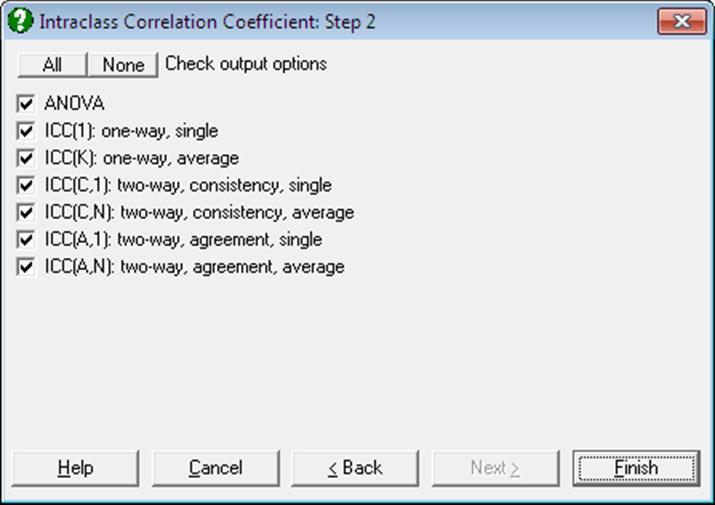

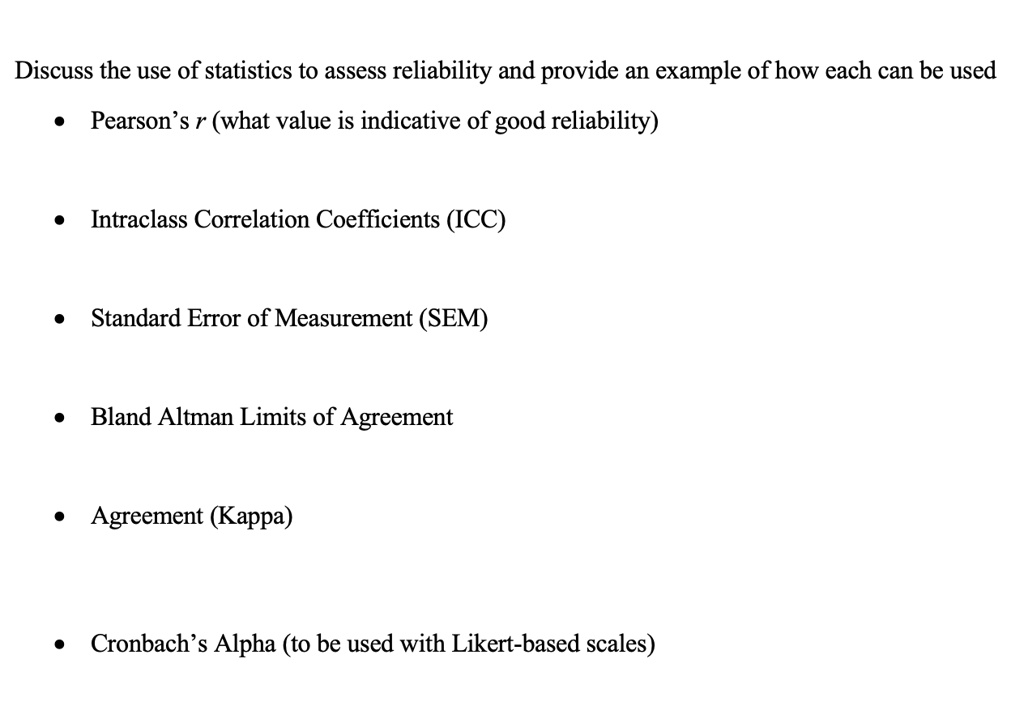

SOLVED: Discuss the use of statistics to assess reliability and provide an example of how each can be used: Pearson's r (what value is indicative of good reliability), Intraclass Correlation Coefficients (ICC),

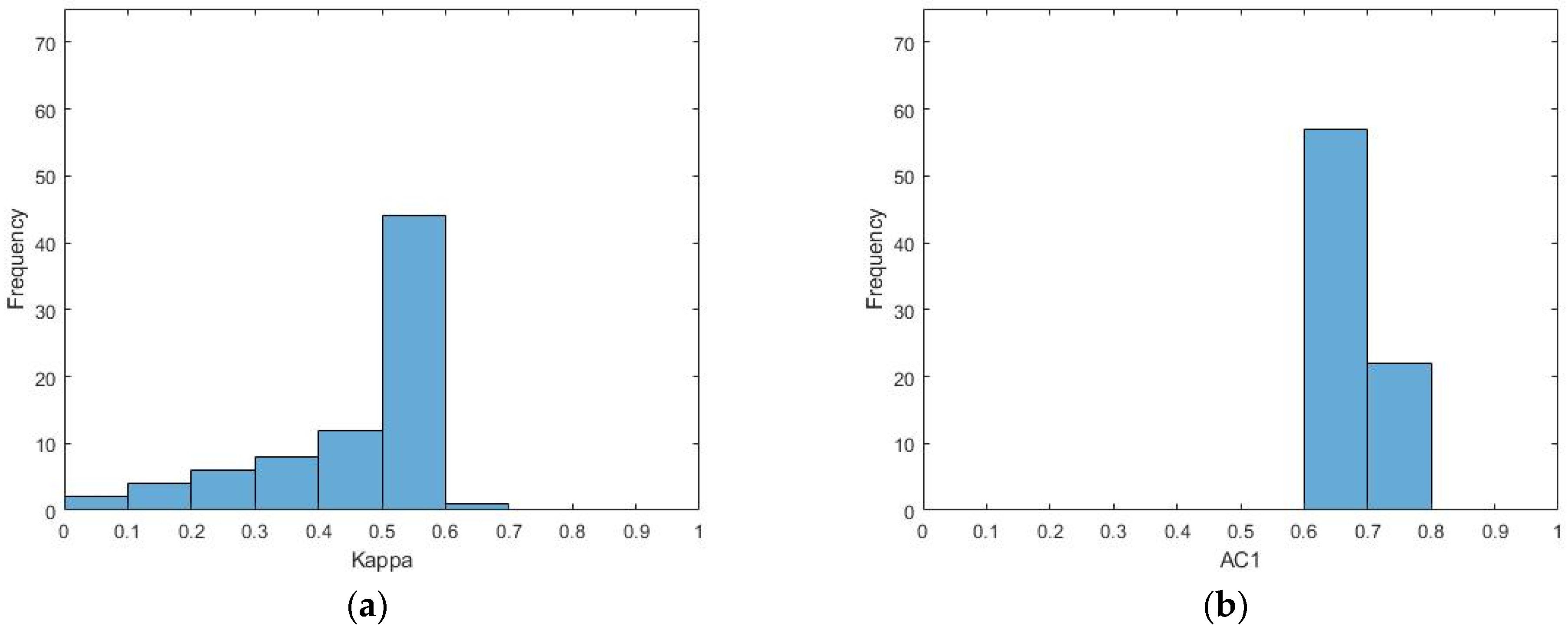

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters